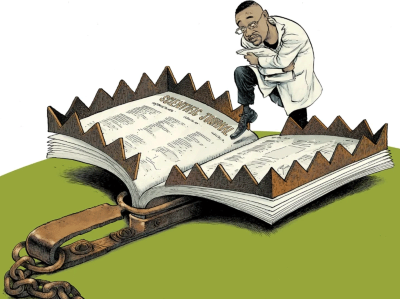

An AI tool can screen thousands of journals and identify ones that violate quality standards. Credit: PaulPaladin/Alamy

Researchers have identified more than 1,000 potentially problematic open-access journals using an artificial intelligence (AI) tool that screened around 15,000 titles for signs of dubious publishing practices.

The approach, described in Science Advances on 27 August [1], could be used to help tackle the rise in what the study authors call “questionable open-access journals”: those that charge fees to publish papers without doing rigorous peer review or quality checks.

None of the journals flagged by the tool has previously been on any kind of watchlist, and some titles are owned by large, reputable publishers. Together, the journals have published hundreds of thousands of research papers that have received millions of citations.

The study suggests that “there’s a whole group of problematic journals in plain sight that are functioning as supposedly respected journals that really don’t deserve that qualification”, says Jennifer Byrne, a research-integrity sleuth and cancer researcher at the University of Sydney, Australia.

The tool is available online in a closed beta version, and organizations that index journals, or publishers, can use it to review their portfolios, says study co-author Daniel Acuña, a computer scientist at the University of Colorado Boulder. But, he adds, the AI sometimes makes mistakes, and is not designed to replace detailed evaluations of journals and individual publications that might result in a title being removed from an index. “A human expert should be part of the vetting process” before any action is taken, he says.

Screening journals

The AI tool can analyse a vast amount of information from journals’ websites and the papers they publish, and search for red flags, such as short turnaround times for publishing articles and high rates of self-citation. It also assesses whether members of a journal’s editorial board are affiliated with well-known, reputable research institutions, and checks how transparent publications are about licensing and fees. Several of the criteria used to train the tool come from best-practice guidance developed by the Directory of Open Access Journals (DOAJ), an index of open-access journals run by the non-profit DOAJ Foundation in Roskilde, Denmark.

Cenyu Shen, the DOAJ’s deputy head of editorial quality, who is based in Helsinki, says that the number of problematic journals is rising, and that their “tactics are becoming more sophisticated”. “We are observing more instances where questionable publishers acquire legitimate journals, or where paper mills purchase journals to publish low-quality work,” she adds. (Paper mills are businesses that sell fake papers and authorships.)

The DOAJ’s own quality checks on journals are done mostly manually and are initiated only after receiving complaints. In 2024, the directory investigated 473 journals, a rise of 40% compared with 2021. “The time our team spent on these investigations also grew significantly by nearly 30%, to 837 hours,” says Shen.

AI tools could help to speed up some of these assessments, Acuña says. He and his colleagues trained their model on 12,869 journals that are currently indexed in the DOAJ as legitimate, as well as 2,536 that the directory had flagged as violating its quality standards.

When the researchers asked the AI to evaluate 15,191 open-access journals listed in the public database Unpaywall, it identified 1,437 journals as questionable. The team estimated that some 345 of these were mistakenly flagged: they included discontinued titles, book series and journals from small, learned-society publishers. On the basis of estimated error rates, the researchers also calculated that the tool had failed to flag a further 1,782 questionable journals.
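
To put those figures in context, here is a rough back-of-envelope calculation in Python using only the numbers reported above. The function and variable names are illustrative, and the resulting precision and recall are derived here from the article's estimates; they are not values reported by the study.

```python
def screening_estimates(flagged: int, false_positives: int, missed: int) -> dict:
    """Derive rough screening metrics from the counts quoted in the article.

    flagged         -- journals the tool marked as questionable
    false_positives -- flagged journals estimated to be legitimate
    missed          -- questionable journals the tool is estimated to have missed
    """
    true_positives = flagged - false_positives
    estimated_questionable = true_positives + missed
    return {
        "true_positives": true_positives,
        "estimated_questionable": estimated_questionable,
        # Share of flagged journals that are estimated to be truly questionable.
        "precision": true_positives / flagged,
        # Share of all estimated questionable journals that the tool caught.
        "recall": true_positives / estimated_questionable,
    }


# Counts taken from the article; all are estimates, not ground truth.
SCREENED = 15_191
est = screening_estimates(flagged=1_437, false_positives=345, missed=1_782)

print(f"Correctly flagged:        {est['true_positives']}")
print(f"Estimated questionable:   {est['estimated_questionable']}")
print(f"Precision (approx.):      {est['precision']:.2f}")
print(f"Recall (approx.):         {est['recall']:.2f}")
print(f"Share of screened titles: {est['estimated_questionable'] / SCREENED:.1%}")
```

On these estimates, roughly three-quarters of the flagged journals are genuinely questionable, but the tool catches well under half of the questionable titles thought to be in the sample. That is consistent with Acuña's point that a human expert should be part of the vetting process.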
